How to Reduce ElevenLabs TTS Latency in Voice AI Calls (2026 Guide)

A practical guide to minimizing ElevenLabs text-to-speech latency in production voice AI — model selection, streaming optimization, connection pre-warming, and Twilio silence threshold tuning.

TL;DR — The short answer

1. eleven_flash_v2_5 is the correct default model for real-time conversational voice AI in 2026: its p50 first-chunk latency of 75–150ms is 2–4x faster than turbo and 5–8x faster than multilingual_v2.

2. Streaming mode is not optional for production voice AI: non-streaming adds 400–800ms of unnecessary latency for typical response lengths and makes silence-detection-induced drops significantly more frequent.

3. Twilio's silence_timeout should be set to p95 TTS latency plus a 2-second buffer; a timeout below 5 seconds with any ElevenLabs model will produce measurable silent failure rates at production scale.

4. Connection pre-warming eliminates 80–200ms of cold-start overhead and is the single highest-ROI latency optimization that requires zero changes to your TTS pipeline or model selection.

ElevenLabs model benchmarks: flash vs turbo vs multilingual

Streaming optimization: why most teams configure it wrong

Check that the voice.chunkPlan setting is configured appropriately for your latency target. Vapi's default chunk plan accumulates tokens before sending to ElevenLabs to improve prosody, which adds 100–300ms to first-chunk latency in exchange for more natural speech rhythm. For maximum speed, set the chunk plan to send on first token. For Retell, the equivalent setting is the response_delay parameter.

Connection pre-warming: eliminating cold-start latency

Twilio silence_timeout tuning for ElevenLabs response times

inactivity_timeout parameter on the

Measuring p50/p95 latency in production: instrumentation patterns

first_chunk_latency = first_chunk_at - request_sent_at. This is the metric you care about for silence timeout tuning. total_generation_time = last_chunk_at - request_sent_at is useful for cost analysis (it correlates with character count) but not for latency configuration.

Store one record per synthesis request: {call_sid, elevenlabs_session_id, model, input_char_count, first_chunk_latency_ms, total_generation_ms, timestamp}. Index on timestamp and model for efficient percentile queries.

Advanced: request batching and input length optimization

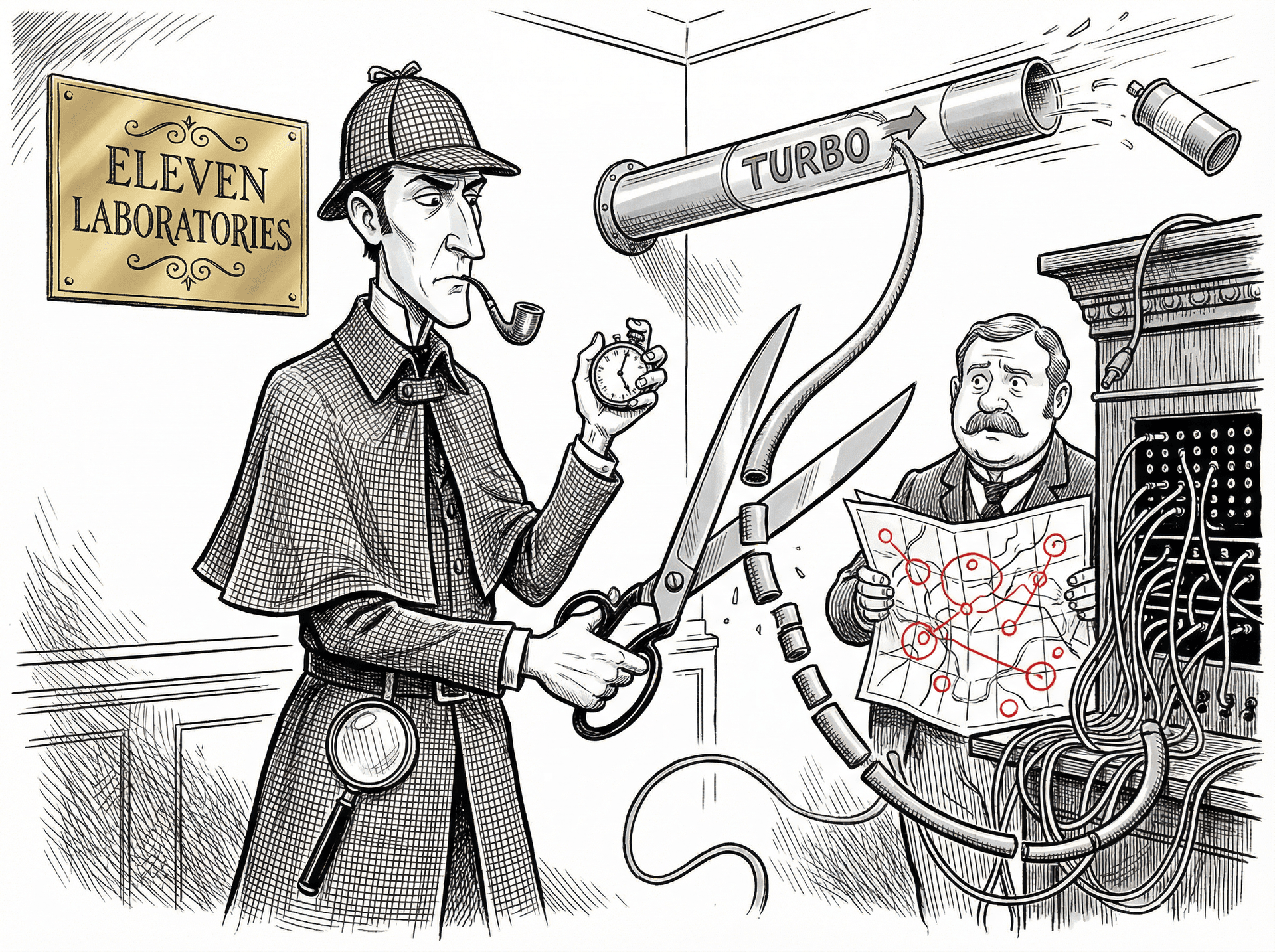
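One common input-length optimization is to send text to the TTS engine one sentence at a time instead of waiting for the full LLM response. The sketch below shows a minimal sentence buffer for that purpose. The function name `sentences_from_tokens` is hypothetical, the boundary rule (`.`, `!`, or `?` followed by whitespace) is a deliberately simple assumption, and the step that actually sends each sentence to ElevenLabs is left out.

```python
import re
from typing import Iterable, Iterator

# Assumed boundary rule: ., !, or ? followed by at least one whitespace character.
_BOUNDARY = re.compile(r"[.!?]\s+")


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Accumulate LLM tokens and yield each sentence as soon as its boundary appears."""
    buffer = ""
    for token in tokens:
        buffer += token
        # One token can close more than one sentence, so keep splitting.
        while True:
            match = _BOUNDARY.search(buffer)
            if match is None:
                break
            sentence = buffer[: match.end()].strip()
            buffer = buffer[match.end():]
            if sentence:
                yield sentence
    # The final sentence has no trailing whitespace, so flush it when the stream ends.
    tail = buffer.strip()
    if tail:
        yield tail


if __name__ == "__main__":
    llm_tokens = ["Hel", "lo the", "re. How", " are you", "? Fine", "."]
    for sentence in sentences_from_tokens(llm_tokens):
        print(sentence)  # each sentence would be handed to the TTS queue here
```

A rule this simple also splits on abbreviations such as "Dr. Smith", so a production version would likely need an exception list or a minimum sentence length before flushing.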

Detect sentence boundaries (., !, ? followed by whitespace), accumulate tokens into a sentence buffer, and flush to ElevenLabs when a boundary is detected. Queue subsequent sentences for synthesis while the first is playing. The complexity is in managing the queue: if the second sentence synthesizes faster than the first plays, you can begin streaming it immediately; if it is slower, you need a brief gap strategy (a pause, a filler phrase, or silence) to avoid jarring interruptions.

How Sherlock surfaces ElevenLabs latency spikes automatically

Frequently asked questions

What is normal ElevenLabs TTS latency in production?

Under normal API load, eleven_flash_v2_5 produces first-chunk latency of 75–150ms for inputs under 100 characters when streaming is enabled. eleven_turbo_v2_5 runs 200–350ms for the same input size. eleven_multilingual_v2, the highest-quality model, averages 400–700ms first-chunk latency. These are p50 figures — at p95 during peak load periods, add 30–60% to each. Your effective latency budget also includes the WebSocket roundtrip to your server (15–40ms typical on a well-located server) and Twilio's media processing overhead (30–80ms). A realistic end-to-end p50 for flash with streaming enabled is 250–350ms measured from the moment the LLM generates the last token to the moment audio starts playing on the caller's handset.
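The p95 adjustment can be worked through as plain arithmetic on the p50 ranges quoted above. The sketch below assumes the 30% uplift applies to the low end of each range and the 60% uplift to the high end, which is a simplification; the dictionary and function names are illustrative only.

```python
from typing import Dict, Tuple

# p50 first-chunk latency ranges in milliseconds, as quoted in the text.
P50_FIRST_CHUNK_MS: Dict[str, Tuple[int, int]] = {
    "eleven_flash_v2_5": (75, 150),
    "eleven_turbo_v2_5": (200, 350),
    "eleven_multilingual_v2": (400, 700),
}


def p95_range_ms(
    p50_range: Tuple[int, int],
    low_uplift_pct: int = 30,
    high_uplift_pct: int = 60,
) -> Tuple[float, float]:
    """Estimate a p95 range by adding 30-60% to a p50 range (assumed pairing of ends)."""
    low, high = p50_range
    return (low * (100 + low_uplift_pct) / 100, high * (100 + high_uplift_pct) / 100)


if __name__ == "__main__":
    for model, p50 in P50_FIRST_CHUNK_MS.items():
        low, high = p95_range_ms(p50)
        print(f"{model}: estimated p95 {low:.0f}-{high:.0f} ms")
```

Under these assumptions the estimated p95 ranges come out at roughly 98–240ms for flash, 260–560ms for turbo, and 520–1,120ms for multilingual_v2. Treat them as planning numbers and replace them with measured percentiles once you have production data.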

How do I choose the right ElevenLabs model for a voice AI call?

For real-time conversational AI where caller experience depends on fast response, eleven_flash_v2_5 is the correct default in 2026 — it has the lowest latency of any available model and sufficient quality for speech comprehension. Reserve eleven_turbo_v2_5 for use cases where the slight quality improvement is worth 150–200ms of additional first-chunk latency — for example, high-stakes enterprise calls where voice quality signals credibility. Avoid eleven_multilingual_v2 in real-time conversation paths unless you require non-English language support that flash does not cover; its latency profile makes it unsuitable for low-silence-threshold configurations. When your voice AI runs through Vapi or Retell, these platforms expose their own model selection settings — ensure you are setting ElevenLabs model selection at the platform level, not relying on platform defaults, which may lag behind ElevenLabs' latest model releases.

What silence timeout should I configure in Twilio for voice AI?

The correct silence timeout depends directly on your p95 end-to-end TTS latency. Measure p95 TTS latency for your specific model and input-length distribution over at least 1,000 calls. Your silence_timeout should be set to p95_latency + 2,000ms minimum — giving your AI agent time to generate and begin streaming audio even on a slow tail-latency call. A common production configuration for eleven_flash_v2_5 with streaming enabled is silence_timeout=7 seconds. Setting it below 5 seconds with any ElevenLabs model other than flash is likely to produce silence-detection-induced call drops on p95+ calls, which will show in your logs as 'completed' calls with anomalously short duration.
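The p95 + 2,000ms rule above can be sketched as a small calculation over logged first-chunk latencies. The function names here are hypothetical, and the nearest-rank percentile method is an assumption chosen for simplicity; a metrics store may interpolate differently and give slightly different values.

```python
import math
from typing import Sequence


def percentile(samples_ms: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def recommended_silence_timeout_ms(
    samples_ms: Sequence[float], buffer_ms: float = 2000.0
) -> float:
    """Floor for the silence timeout: p95 latency plus the 2-second buffer from the text."""
    return percentile(samples_ms, 95) + buffer_ms


if __name__ == "__main__":
    # Illustrative data only: 1,000 synthetic samples spread evenly from 1 to 1,000 ms.
    samples = [float(ms) for ms in range(1, 1001)]
    print(percentile(samples, 50), percentile(samples, 95))
    print(recommended_silence_timeout_ms(samples))
```

The result is a minimum, not a target. Feed it end-to-end latencies from at least 1,000 real calls, and round up to whatever granularity your telephony configuration accepts.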

When should I use ElevenLabs streaming vs non-streaming?

Always use streaming for real-time voice AI calls. Non-streaming mode requires ElevenLabs to generate the complete audio file before returning any bytes — for a 200-character response this adds 400–800ms of latency on top of the generation time. Streaming mode begins returning audio chunks as soon as the first phoneme is synthesized, typically within 75–150ms of request submission for flash. The only case to consider non-streaming is when you need the complete audio duration before the call (for example, to mix it with background audio or to generate a time-synchronized transcript). For any configuration where the caller is waiting for the AI response in real time, streaming is mandatory for acceptable latency.
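To check what streaming buys you on your own traffic, it helps to time the first audio chunk separately from the last. The sketch below wraps any iterable of audio chunks and records both timestamps. `TtsTiming` and `timed_chunks` are hypothetical names, and the chunk source is assumed to be whatever iterator your TTS client returns; no ElevenLabs API call is shown.

```python
import time
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, Optional


@dataclass
class TtsTiming:
    """Timestamps, in seconds, for one synthesis request."""
    request_sent_at: float
    first_chunk_at: Optional[float] = None
    last_chunk_at: Optional[float] = None

    @property
    def first_chunk_latency_ms(self) -> Optional[float]:
        if self.first_chunk_at is None:
            return None
        return (self.first_chunk_at - self.request_sent_at) * 1000

    @property
    def total_generation_ms(self) -> Optional[float]:
        if self.last_chunk_at is None:
            return None
        return (self.last_chunk_at - self.request_sent_at) * 1000


def timed_chunks(
    chunks: Iterable[bytes],
    timing: TtsTiming,
    clock: Callable[[], float] = time.monotonic,
) -> Iterator[bytes]:
    """Pass chunks through unchanged while recording first and last arrival times."""
    for chunk in chunks:
        now = clock()
        if timing.first_chunk_at is None:
            timing.first_chunk_at = now
        timing.last_chunk_at = now
        yield chunk


if __name__ == "__main__":
    timing = TtsTiming(request_sent_at=time.monotonic())
    fake_stream = iter([b"\x00" * 320, b"\x00" * 320])  # stand-in for a TTS stream
    for audio in timed_chunks(fake_stream, timing):
        pass  # forward audio to the call here
    print(timing.first_chunk_latency_ms, timing.total_generation_ms)
```

A non-streaming response behaves like a single chunk, so its first-chunk latency equals its total generation time. Comparing the two numbers per request shows how much of the wait streaming removes.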

Does connection pre-warming actually reduce ElevenLabs latency?

Yes, measurably. ElevenLabs WebSocket connections include a TLS handshake and HTTP upgrade that adds 80–200ms to the first request on a cold connection. By establishing the WebSocket connection 500ms before you expect to need it — at the moment the inbound call arrives, for example, rather than at the moment the LLM first responds — you eliminate this cold-start overhead from the latency-critical path. Connection pre-warming is most impactful when your call volume is low enough that connections cannot be pooled (fewer than 20 concurrent calls). Above 20 concurrent calls, maintaining a warm connection pool sized to your concurrency level produces the same result more efficiently.
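The pre-warming pattern can be sketched with asyncio: start the connection handshake when the inbound call arrives, and await it only when the first text is ready for synthesis. `PrewarmedConnection` is a hypothetical helper, and the `connect` callable stands in for whatever coroutine opens your TTS WebSocket; the demo uses a fake connection with a simulated 150ms handshake.

```python
import asyncio
from typing import Any, Awaitable, Callable, Optional


class PrewarmedConnection:
    """Starts a connection in the background so the handshake is off the critical path."""

    def __init__(self, connect: Callable[[], Awaitable[Any]]) -> None:
        self._connect = connect
        self._task: Optional["asyncio.Future[Any]"] = None

    def start(self) -> None:
        """Call inside a running event loop when the inbound call arrives."""
        if self._task is None:
            self._task = asyncio.ensure_future(self._connect())

    async def get(self) -> Any:
        """Call when text is ready; waits only for any handshake time still remaining."""
        self.start()  # falls back to a cold connect if start() was never called
        assert self._task is not None
        return await self._task


async def _demo() -> None:
    async def fake_connect() -> str:
        await asyncio.sleep(0.15)  # simulated TLS handshake and HTTP upgrade
        return "open-socket"

    warm = PrewarmedConnection(fake_connect)
    warm.start()              # inbound call arrives
    await asyncio.sleep(0.5)  # speech recognition and LLM work happen meanwhile
    connection = await warm.get()  # handshake already finished by now
    print(connection)


if __name__ == "__main__":
    asyncio.run(_demo())
```

This covers the one-connection-per-call case. At higher concurrency, a pool of already-open connections serves the same purpose, as the answer above notes.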
